Intro

SEO and AI search as a full-package service looks fundamentally different in 2026 than it did back in 2015. Google Search and LLMs are now smart enough to recognize the intent behind a query, so ranking is no longer about exact keyword matching. It is about how well you demonstrate authority and expertise on the topic, so that Google can build a better knowledge graph for your website.

Think about someone searching Google for “where to eat lunch in Amman?”. What actually shows up in the search results are pages with titles like “best restaurants in Amman”. So it’s no longer about exact matching, but about matching the intent behind the search query. That’s what modern “semantic search” is all about.

Yet you still find SEO and GEO (generative engine optimization) practitioners who rely entirely on tools like Semrush and Ahrefs for keyword research, whether they’re doing SEO for eCommerce websites or for complex, E-E-A-T-driven niches such as healthcare.

Side note: SEO and GEO are two sides of the same coin. Ignore the myths that claim otherwise!

How to start keyword research from semantic entities?

First Approach: Using TextRazor API

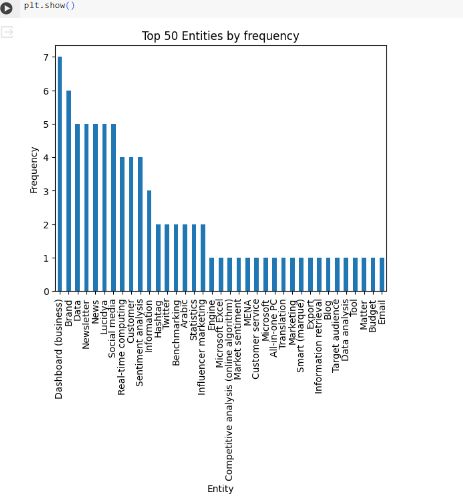

Here is an automated process I’ve developed (using TextRazor) to extract entities as sub-topics relevant to the primary topic of each pillar page on the website:

1) I use the TextRazor API to extract the entities of a single page, then visualize them with a matplotlib chart in Python.

2) Check the related queries in GSC for the most frequent entities and organize them in a sheet with metrics from GSC/Ahrefs such as clicks, impressions, position, search volume and CPC.

3) For competitive niches, I follow the previous step by extracting entities from the SERP, saving them to CSV, then cleaning the data by filtering out irrelevant entities and domains (even better if you have already benchmarked major competitors using a SERP heatmap).

4) For non-competitive niches, you can skip the previous step and instead extract entities from the top 2 or 3 URLs showing in the SERP for the focus keyword.

5) Mark the entities from competitor pages that your page doesn’t cover yet and see what context they are mentioned in. Research relevant keywords for each entity and gather ideas on how to cover them in your pillar page (H2s, H3s or maybe FAQs).
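Step 1 can be sketched roughly as follows. This is a minimal illustration and not the full script: it assumes the official `textrazor` Python client and `matplotlib` are installed, and the function names, the `min_relevance` cut-off of 0.5 and the `limit` of 15 are my own illustrative choices that you should tune per niche.

```python
from collections import Counter


def extract_entities(url, api_key):
    """Return one (entity_id, relevance_score) tuple per entity mention on a page."""
    import textrazor  # third-party client: pip install textrazor

    textrazor.api_key = api_key
    client = textrazor.TextRazor(extractors=["entities"])
    response = client.analyze_url(url)
    return [(entity.id, entity.relevance_score) for entity in response.entities()]


def top_entities(entities, min_relevance=0.5, limit=15):
    """Count mentions per entity, ignoring low-relevance ones (threshold is an assumption)."""
    counts = Counter(
        entity_id
        for entity_id, relevance in entities
        if entity_id and relevance >= min_relevance
    )
    return counts.most_common(limit)


def plot_entities(ranked, title):
    """Draw a horizontal bar chart of (entity, mention_count) pairs."""
    import matplotlib.pyplot as plt  # third-party: pip install matplotlib

    labels = [name for name, _ in ranked]
    values = [count for _, count in ranked]
    fig, ax = plt.subplots(figsize=(8, 6))
    ax.barh(labels[::-1], values[::-1])  # reversed so the most frequent entity is on top
    ax.set_xlabel("Mentions on page")
    ax.set_title(title)
    fig.tight_layout()
    plt.show()
```

To try it, call `extract_entities()` with a page URL and your own TextRazor API key, pass the result to `top_entities()`, then hand the ranked list to `plot_entities()`.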

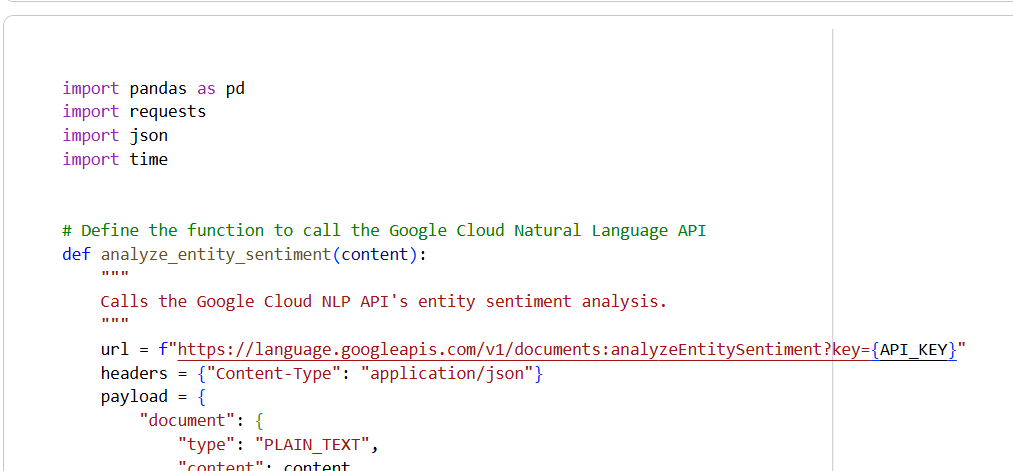
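The gap check in step 5 boils down to a set comparison. Here is a small sketch of that idea, assuming you already have the entity names for your own page and for each competitor URL. The function name `uncovered_entities` and the `min_competitors` parameter are hypothetical, introduced only for this example.

```python
def uncovered_entities(own_entities, competitor_entities, min_competitors=2):
    """Find entities competitors mention that our page does not.

    own_entities: iterable of entity names found on our page.
    competitor_entities: dict mapping competitor URL -> iterable of entity names.
    Returns (entity, [urls]) pairs, most widely covered entities first.
    """
    own = {name.casefold() for name in own_entities}
    coverage = {}
    for url, names in competitor_entities.items():
        # De-duplicate per page so each URL counts at most once per entity
        for name in {n.casefold() for n in names}:
            if name not in own:
                coverage.setdefault(name, []).append(url)
    gaps = {
        name: urls for name, urls in coverage.items() if len(urls) >= min_competitors
    }
    # Sort by number of competitor pages (descending), then alphabetically
    return sorted(gaps.items(), key=lambda item: (-len(item[1]), item[0]))
```

Requiring an entity to appear on at least two competitor pages is just one way to filter out noise. For non-competitive niches with only 2 or 3 URLs you may want `min_competitors=1`.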

Second Approach: Using Google NLP API

Call the Google NLP API from Python code to extract entities from a page’s text. In my experience it works much better than the TextRazor API mentioned above.
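A minimal sketch of such a call, assuming the `google-cloud-language` package is installed and Google Cloud credentials are already configured in your environment. The helper names `analyze_entities` and `rank_by_salience` are mine, not part of the library.

```python
def analyze_entities(text):
    """Return a (name, type, salience) tuple for each entity found in `text`."""
    from google.cloud import language_v1  # third-party: pip install google-cloud-language

    client = language_v1.LanguageServiceClient()
    document = language_v1.Document(
        content=text, type_=language_v1.Document.Type.PLAIN_TEXT
    )
    response = client.analyze_entities(request={"document": document})
    return [
        (entity.name, language_v1.Entity.Type(entity.type_).name, entity.salience)
        for entity in response.entities
    ]


def rank_by_salience(entities, limit=10):
    """Keep the `limit` entities with the highest salience score."""
    return sorted(entities, key=lambda entity: entity[2], reverse=True)[:limit]
```

Pass the visible text of a page (not raw HTML) to `analyze_entities()`, then use `rank_by_salience()` to see which entities Google treats as most central to the page.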

Once you benchmark competitors, you will notice a strong correlation between the number of entities with a salience score > 0.01 extracted from a page’s text and the number of keywords that page ranks for around the main topic.
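If you want to check this correlation on your own benchmark, something like the sketch below would do. It assumes each entity is a `(name, type, salience)` tuple and that you have collected the ranking-keyword count per page yourself (for example from Ahrefs or GSC). Both helper names are hypothetical, and Pearson’s r is my choice of measure here.

```python
from math import sqrt


def count_salient(entities, threshold=0.01):
    """Count entities whose salience score is strictly above the threshold."""
    return sum(1 for _name, _type, salience in entities if salience > threshold)


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length number lists."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two lists of equal length with at least 2 values")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one list is constant")
    return cov / sqrt(var_x * var_y)
```

Build one list with `count_salient()` per competitor page and a second list with that page’s ranking-keyword count, then pass both to `pearson()`. A value close to 1 supports the pattern, but with only a handful of pages treat it as a hint rather than proof.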

Furthermore, I’d highly recommend using BERTopic in Python to categorize the topical landscape of a website and to conduct a SWOT analysis (as part of the SEO audit scope) within a specific niche against direct competitors.
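As a rough sketch of how that could look, assuming the `bertopic` package is installed and you have already scraped the text of each page along with its URL. The helper names and the `min_topic_size` value are illustrative, and BERTopic generally needs a reasonably large set of pages (a few hundred is a safer start than a few dozen) to produce stable topics.

```python
from collections import Counter, defaultdict
from urllib.parse import urlparse


def fit_topics(page_texts, min_topic_size=10):
    """Fit BERTopic on page texts and return the model plus one topic id per page."""
    from bertopic import BERTopic  # third-party: pip install bertopic

    model = BERTopic(min_topic_size=min_topic_size)
    topics, _probabilities = model.fit_transform(page_texts)
    return model, topics


def coverage_by_domain(urls, topics):
    """Count, for every topic, how many pages each domain has on it."""
    coverage = defaultdict(Counter)
    for url, topic_id in zip(urls, topics):
        if topic_id == -1:  # BERTopic assigns -1 to outlier pages
            continue
        coverage[topic_id][urlparse(url).netloc] += 1
    return {topic_id: dict(counts) for topic_id, counts in coverage.items()}
```

Run `fit_topics()` on the combined pages of your site and your direct competitors, inspect the topic labels with `model.get_topic_info()`, then use `coverage_by_domain()` to spot topics where competitors have many pages and you have few or none. Those gaps feed directly into the weaknesses and opportunities of the SWOT analysis.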

Want to know more? Click on this link and feel free to contact me, and I will happily send you the full instructions along with the Python code.