Crawl budget is rarely an issue for the websites SEO service providers work on unless the site is large: we’re talking upwards of 50K pages and into the hundreds of thousands. So you shouldn’t worry about it unless your website is huge.

But even if the website is small to medium-sized, you can still improve the crawl rate for better SEO performance.

How to Diagnose and Fix Crawl Efficiency Issues on Your Website?

First of all, if you think the website is not being crawled efficiently, try to get access to the server log files, although not all clients allow that due to security concerns (and it’s not necessary in most cases). Export them to an Excel sheet and split the IP address into a separate column so you can mark the Googlebot requests. Then figure out which pages Google crawls most. Alternatively, Screaming Frog Log File Analyser is an effective tool for that purpose.
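If you would rather script the log analysis than do it in a spreadsheet, here is a minimal sketch. It assumes the logs are in the common Apache/Nginx "combined" format and marks Googlebot by user-agent string only, which can be spoofed; in a real audit you would also verify the IP address. The sample lines and the `googlebot_hits` helper are made up for illustration.

```python
import re
from collections import Counter

# Combined Log Format:
# IP ident user [time] "METHOD path protocol" status bytes "referer" "user-agent"
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[[^\]]+\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

GOOGLEBOT_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"

def googlebot_hits(lines):
    """Count requests per URL path whose user-agent mentions Googlebot."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if match and "Googlebot" in match.group("agent"):
            hits[match.group("path")] += 1
    return hits

# Hypothetical sample lines; in practice, iterate over the real log file.
sample_log = [
    f'66.249.66.1 - - [10/Mar/2024:10:00:00 +0000] "GET /blog/post-a HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    f'66.249.66.1 - - [10/Mar/2024:10:00:05 +0000] "GET /blog/post-a HTTP/1.1" 200 5120 "-" "{GOOGLEBOT_UA}"',
    '203.0.113.7 - - [10/Mar/2024:10:00:09 +0000] "GET /pricing HTTP/1.1" 200 2048 "-" "Mozilla/5.0 (Windows NT 10.0)"',
    f'66.249.66.1 - - [10/Mar/2024:10:01:00 +0000] "GET /tag/old?page=9 HTTP/1.1" 200 900 "-" "{GOOGLEBOT_UA}"',
]

hits = googlebot_hits(sample_log)
for path, count in hits.most_common():
    print(path, count)
```

Sorting by hit count shows at a glance whether Googlebot spends its visits on the pages you care about or on parameterized and archive URLs.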

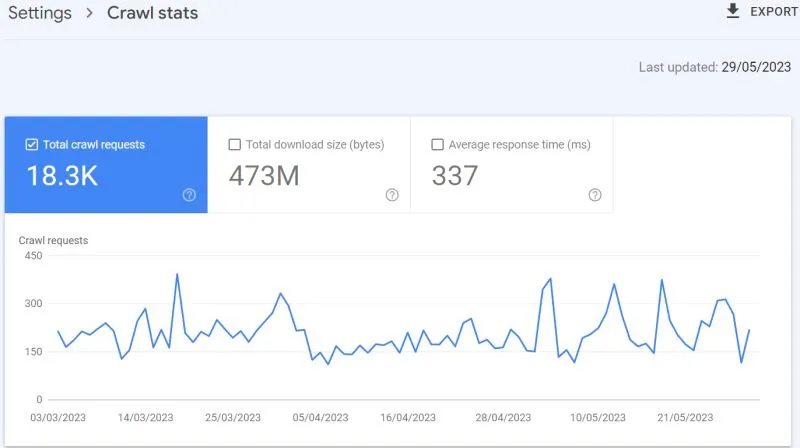

Or, to cut it short, if your website is not big, you can get insight into the crawl rate from GSC, as the screenshot below shows.

Second, crawl your website with the Screaming Frog SEO Spider. Export the pages from the crawl and sort them by topic in a neat Google Sheet. Assign GSC metrics (clicks, impressions, CTR) to each page using the APIs or a VLOOKUP formula that pulls the data from a separate exported GSC sheet.
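The VLOOKUP step can also be done in a few lines of Python. This is a rough sketch that assumes both exports are CSV files; the column names (`Address`, `Page`, `Clicks`, `Impressions`) and the sample rows are assumptions, so adjust them to whatever your exports actually contain.

```python
import csv
import io

# Stand-ins for the two exported files (hypothetical data and column names).
crawl_export = io.StringIO(
    "Address,Title\n"
    "https://example.com/,Home\n"
    "https://example.com/guide,Guide\n"
    "https://example.com/old-news,Old news\n"
)
gsc_export = io.StringIO(
    "Page,Clicks,Impressions\n"
    "https://example.com/,120,4000\n"
    "https://example.com/guide,30,600\n"
)

def join_crawl_with_gsc(crawl_file, gsc_file):
    """Attach GSC clicks, impressions and CTR to every crawled URL."""
    gsc_by_url = {row["Page"]: row for row in csv.DictReader(gsc_file)}
    merged = []
    for row in csv.DictReader(crawl_file):
        metrics = gsc_by_url.get(row["Address"], {})
        clicks = int(metrics.get("Clicks", 0))
        impressions = int(metrics.get("Impressions", 0))
        merged.append({
            "url": row["Address"],
            "clicks": clicks,
            "impressions": impressions,
            # Pages missing from the GSC export get a CTR of 0.
            "ctr": clicks / impressions if impressions else 0.0,
        })
    return merged

merged = join_crawl_with_gsc(crawl_export, gsc_export)
for page in merged:
    print(page)
```

Crawled pages that never appear in the GSC export come out with zero clicks and impressions, which is exactly the group worth a closer look in the next step.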

Based on the above, spot the dead or low-performing pages, as they cause content decay later on and dilute crawl efficiency.
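One way to make "dead" and "low-performing" concrete is a simple rule on clicks and impressions. The thresholds and labels below are purely illustrative assumptions, not fixed rules; tune them to the size and niche of the site.

```python
def classify_page(clicks, impressions, min_impressions=100, min_clicks=10):
    """Label a page from its GSC clicks and impressions (illustrative thresholds)."""
    if impressions == 0:
        return "dead"      # never shown in search results
    if impressions < min_impressions:
        return "low"       # barely visible: pruning candidate
    if clicks < min_clicks:
        return "review"    # visible but rarely clicked: inspect before pruning
    return "ok"

# Hypothetical pages with their GSC metrics.
pages = [
    {"url": "/guide", "clicks": 340, "impressions": 9000},
    {"url": "/old-news", "clicks": 0, "impressions": 0},
    {"url": "/tag/misc", "clicks": 1, "impressions": 40},
    {"url": "/comparison", "clicks": 4, "impressions": 5200},
]

labels = {p["url"]: classify_page(p["clicks"], p["impressions"]) for p in pages}
for url, label in labels.items():
    print(url, label)
```

The separate "review" label keeps pages with many impressions but few clicks out of the pruning list, since those may be ranking in a crowded SERP rather than decaying.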

Third, analyze the website’s backlink profile. Export all backlink data from Ahrefs to see which pages get the most backlinks, but only after filtering the backlinks by quality metrics (domain traffic, outbound link ratio, relevancy, etc.).
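The filter-then-count idea can be sketched like this. The field names (`target_url`, `domain_traffic`, `outbound_ratio`) and the thresholds are hypothetical and do not mirror Ahrefs column names; here `outbound_ratio` is assumed to be the share of a referring domain's links that point to other sites.

```python
from collections import Counter

def quality_backlinks_per_page(backlinks, min_domain_traffic=500, max_outbound_ratio=0.8):
    """Count backlinks per target page, keeping only links that pass the quality filters."""
    counts = Counter()
    for link in backlinks:
        if link["domain_traffic"] < min_domain_traffic:
            continue  # referring domain gets too little traffic
        if link["outbound_ratio"] > max_outbound_ratio:
            continue  # referring domain links out too aggressively
        counts[link["target_url"]] += 1
    return counts

# Hypothetical rows from a backlink export.
backlinks = [
    {"target_url": "/guide", "domain_traffic": 12000, "outbound_ratio": 0.2},
    {"target_url": "/guide", "domain_traffic": 3000, "outbound_ratio": 0.4},
    {"target_url": "/guide", "domain_traffic": 20, "outbound_ratio": 0.1},
    {"target_url": "/pricing", "domain_traffic": 900, "outbound_ratio": 0.95},
    {"target_url": "/blog/post-a", "domain_traffic": 800, "outbound_ratio": 0.3},
]

counts = quality_backlinks_per_page(backlinks)
for url, n in counts.most_common():
    print(url, n)
```

Relevancy is left out because it usually needs a manual or topical check rather than a single numeric threshold.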

How to Clean Your Index Then?

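Once the low-performing or dead pages have been identified, they can be pruned by configuring them to return a 410 (Gone) response code. Google handles a 410 much like a typical 404 (Not Found): the URL is dropped from the index and recrawled less and less often over time, but a 410 states explicitly that the removal is permanent. A 403 (Forbidden) is meant for access control; it does not instruct Googlebot to never crawl the page again, so it is not a reliable pruning signal.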
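Whatever status code you settle on, pruning comes down to serving it for a fixed list of URLs. Here is a minimal WSGI sketch of that idea. The paths and the `make_pruning_app` and `status_for` helpers are hypothetical, and the example passes `410 Gone`, the status defined for permanently removed resources; in practice this is usually configured in the web server or CMS rather than in application code.

```python
def make_pruning_app(pruned_paths, pruned_status):
    """Build a WSGI app that answers pruned paths with the given status line."""
    def app(environ, start_response):
        path = environ.get("PATH_INFO", "/")
        headers = [("Content-Type", "text/plain; charset=utf-8")]
        if path in pruned_paths:
            start_response(pruned_status, headers)
            return [b"This page has been removed.\n"]
        start_response("200 OK", headers)
        return [b"OK\n"]
    return app

def status_for(app, path):
    """Call the app directly and return the status line it sends for a path."""
    captured = {}
    def start_response(status, headers):
        captured["status"] = status
    b"".join(app({"PATH_INFO": path, "REQUEST_METHOD": "GET"}, start_response))
    return captured["status"]

# Hypothetical list of pruned URLs.
app = make_pruning_app({"/old-news", "/tag/misc"}, "410 Gone")
print(status_for(app, "/old-news"))
print(status_for(app, "/guide"))

# To try it locally, wsgiref.simple_server.make_server("", 8000, app) can serve it.
```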

Hint: a low-CTR page isn’t necessarily a low-performing or decaying page; the low CTR might be caused by a highly competitive niche or market. Pay attention to that before making final decisions about which pages to prune. The page might be ranking but getting few clicks due to noise in the SERP. In that case, a more effective approach is to enrich the page content through extensive keyword research driven by semantic entities.

Once you identify the pages with the highest-quality links, think about how you can leverage internal linking effectively, for example by linking from those pages to related pages that need more authority.

Furthermore, to group pages by topic at scale, BERTopic can be run in Python with parallel processing, which makes topical analysis across an entire website considerably faster and deeper.

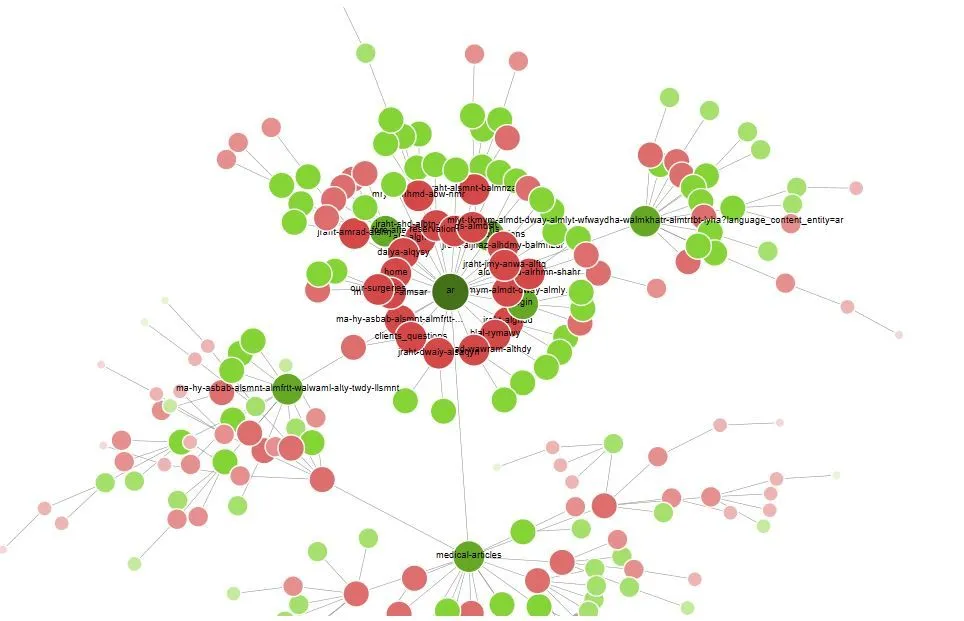

Lastly, check the site structure and the crawl depth: is it well organized in a solid hierarchy? Crawl visualizations from Screaming Frog can give you insights here (see the screenshot above). You can also leverage its new ‘semantic search’ feature for better content audits.
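Crawl depth itself is easy to compute once you have the internal link graph: it is the number of clicks on the shortest path from the homepage. Here is a minimal breadth-first search sketch over a hypothetical link map; the URLs and the "deeper than 3 clicks" cut-off are assumptions for illustration.

```python
from collections import deque

def crawl_depths(links, start="/"):
    """Return the minimum click depth of every page reachable from the start page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths

# Hypothetical internal link graph: page -> pages it links to.
site_links = {
    "/": ["/blog", "/services"],
    "/blog": ["/blog/post-a", "/blog/post-b"],
    "/services": ["/services/seo"],
    "/blog/post-a": ["/blog/archive/2019"],
    "/blog/archive/2019": ["/blog/archive/2019/old-post"],
    "/orphan": ["/"],
}

depths = crawl_depths(site_links)
deep_pages = sorted(url for url, depth in depths.items() if depth > 3)
orphans = sorted(set(site_links) - set(depths))
print(deep_pages)
print(orphans)
```

Pages that sit very deep, or that no internal link reaches at all, are the ones a flatter hierarchy or better internal linking should bring closer to the homepage.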